Industrial production technologies are advancing rapidly, and traditional metrology is increasingly turning to non-contact measurement techniques to keep pace. To sustain the higher throughput that product demand requires, production metrology is moving from the lab to the manufacturing line. This shift is driving the emerging trend towards Metrology in Motion™ and integrated end-to-end production, both of which enable the rapid march towards Factory 4.0.

Companies that can enable these two key approaches will set the pace. The question is how, and the answer is the Digital Twin. In this multi-part blog, we will look at data integrity and the use of digital twins in metrology. In part one, we define the digital twin and explain how we model one, delve into surface geometry and “true value” measurements, and finally discuss the difference between data quality and data quantity.

What’s a Digital Twin?

A Digital Twin is a set of digital data that represents a part in the real world – basically, an electronic representation of a physical part. Digital Twins can carry a range of information, from intrinsic to comprehensive, and offer significant benefits beyond the production setting. Benefits include:

- End-to-end part traceability

- Robust qualitative and quantitative feedback to the design and prototyping processes

- Real-time (or near-real-time) feedback to the upstream work center for production adjustments

- Inline comparison of 3D point clouds to 3D design models

- In-process, inline inspections that identify tool wear from 3D models, supporting preventive maintenance and real-time adjustments to CNC work centers

- Support for Maintenance, Repair and Operations (MRO): scanning legacy parts that have no CAD model and creating 3D models, or using the point clouds as a reference

Though once used purely in a production setting, the digital twin is increasingly being extended throughout the lifecycle of a part. In the medical implant industry, for example, end-to-end part traceability is extremely valuable, as a Digital Twin can follow the part from production all the way to the patient.

For the Digital Twin to be of any value, though, the digital representation of the part must be extremely precise. So how do we model a Digital Twin? Simply put, the Digital Twin is determined by the conventions and parameters of the standards and metrology references being used. Our engineers create designs with tools such as SolidWorks®, which model perfect parts with perfect surfaces – something that doesn’t exist in production. We therefore apply tolerances and accept deviations based on the capabilities of our production equipment. The key to a successful digital twin is to agree on the tools and methods, and then apply them consistently.
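As a rough illustration of applying tolerances to a perfect model, the sketch below compares measured heights against a nominal flat surface and flags points that fall outside a tolerance band. The nominal height, the ±0.010 mm tolerance, and the sample points are made-up values for illustration, not figures from a real part or standard.

```python
# Hypothetical values: nominal surface height and tolerance band, in mm.
NOMINAL_Z = 0.0
TOLERANCE = 0.010


def deviations(points, nominal_z=NOMINAL_Z):
    """Signed deviation of each measured (x, y, z) point from the nominal height."""
    return [z - nominal_z for (_x, _y, z) in points]


def out_of_tolerance(points, nominal_z=NOMINAL_Z, tolerance=TOLERANCE):
    """Return the points whose deviation falls outside the tolerance band."""
    return [p for p in points if abs(p[2] - nominal_z) > tolerance]


# Made-up measurements of a nominally flat face (x, y, z in mm).
measured = [
    (0.0, 0.0, 0.002),
    (5.0, 0.0, -0.004),
    (5.0, 2.0, 0.013),   # deliberately outside the band
    (0.0, 2.0, -0.001),
]

rejects = out_of_tolerance(measured)
print(f"max deviation: {max(abs(d) for d in deviations(measured)):.3f} mm")
print(f"points out of tolerance: {rejects}")
```

The same comparison only stays meaningful if the nominal model and the tolerance are agreed on once and then applied the same way to every part.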

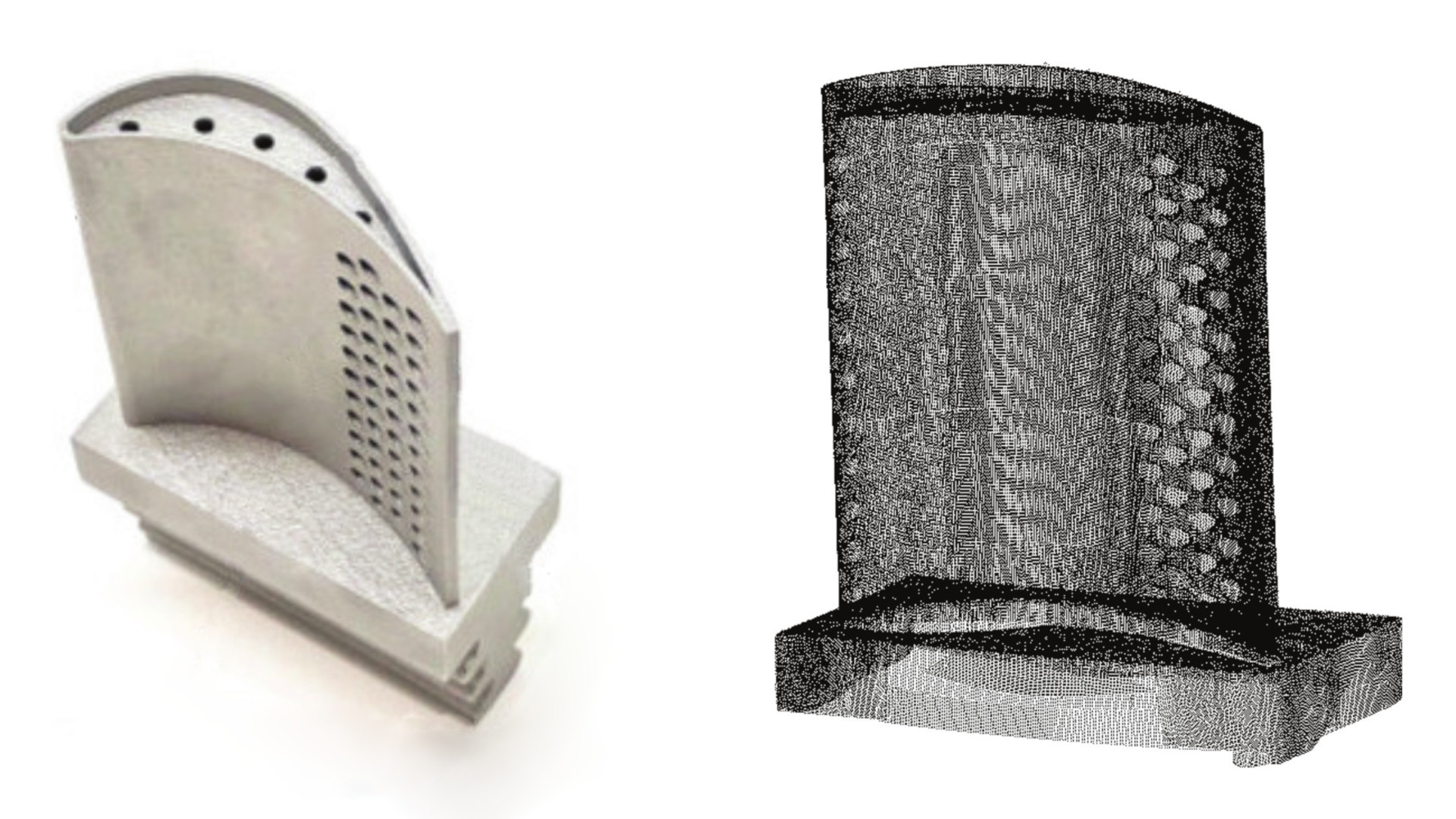

Real-world part and point cloud digital twin.

Surface Geometry and “True Value” Measurements

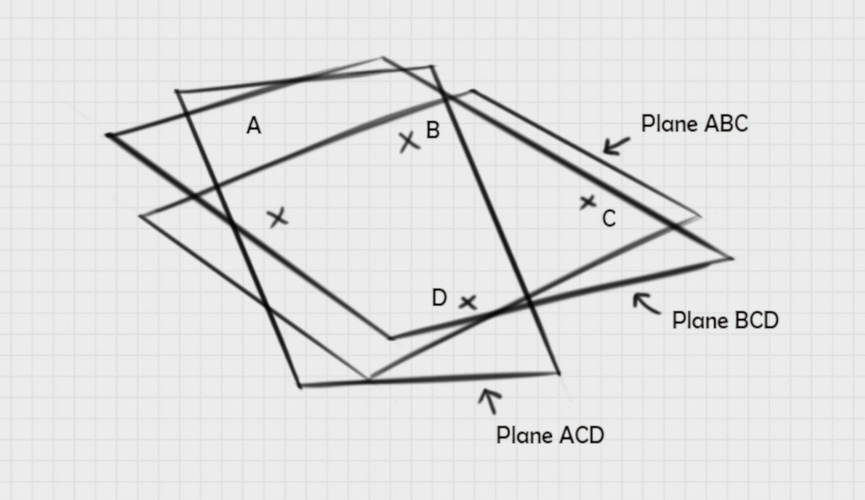

A plane is a mathematical model of a surface, or an approximation of one, defined by three points: two points make a line, and a third point off that line defines the plane. This has long been the accepted method of defining a plane. What happens if we choose four points instead of three? We define three different planes, but which is the true surface? None of them, and all three of them.

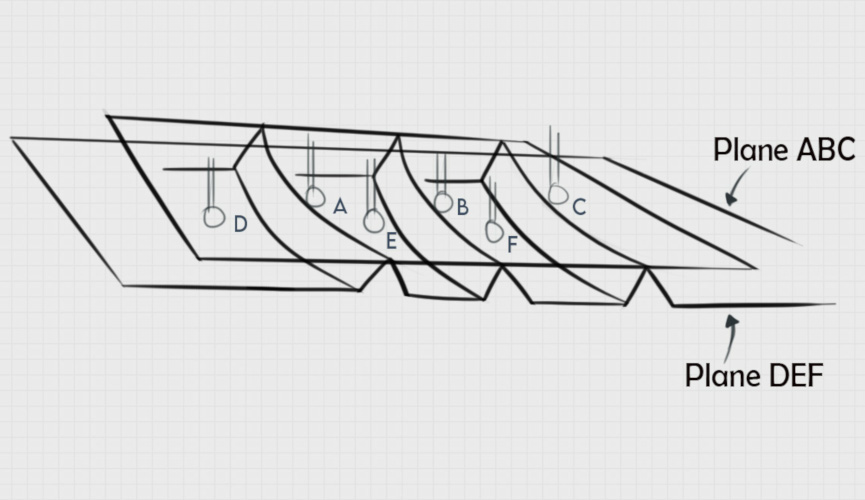

All three mathematically valid planes are models of the same surface; however, any one of the three, or even all of them together, is insufficient to define the surface. As the figure illustrates, a real part features grooves and ridges, so the calculated plane depends entirely on where you contact the surface. A small-diameter tactile probe may settle into three valleys (Plane DEF), while a larger-diameter probe would likely touch the tops of the ridges (Plane ABC). Changing the way we collect data points changes the model, which changes the results. Neither plane is right or wrong, but each is different based on the frame of reference and the origin of the data points.
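To make the Plane ABC versus Plane DEF comparison concrete, here is a minimal sketch that builds a plane from three contact points and compares the two results. The coordinates are invented for illustration: A, B and C sit on ridge tops, D, E and F sit in valleys, and one valley is assumed to be slightly deeper than the others.

```python
import math


def plane_from_points(p, q, r):
    """Return (unit normal n, offset d) of the plane through three points, where n . x = d."""
    u = [q[i] - p[i] for i in range(3)]
    v = [r[i] - p[i] for i in range(3)]
    # The cross product of two in-plane vectors gives the plane normal.
    n = [u[1] * v[2] - u[2] * v[1],
         u[2] * v[0] - u[0] * v[2],
         u[0] * v[1] - u[1] * v[0]]
    length = math.sqrt(sum(c * c for c in n))
    if length == 0:
        raise ValueError("the three points are collinear")
    n = [c / length for c in n]
    if n[2] < 0:  # keep the normal pointing "up" so planes are comparable
        n = [-c for c in n]
    d = sum(n[i] * p[i] for i in range(3))
    return n, d


def height_at(plane, x, y):
    """Height z of the plane at position (x, y); assumes the plane is not vertical."""
    n, d = plane
    return (d - n[0] * x - n[1] * y) / n[2]


# Hypothetical contact points in mm.
A, B, C = (0.25, 0.0, 0.005), (9.25, 0.0, 0.005), (4.25, 2.0, 0.005)     # ridge tops
D, E, F = (0.75, 0.0, -0.005), (9.75, 0.0, -0.005), (4.75, 2.0, -0.007)  # valleys

plane_abc = plane_from_points(A, B, C)
plane_def = plane_from_points(D, E, F)

gap_mm = height_at(plane_abc, 5.0, 1.0) - height_at(plane_def, 5.0, 1.0)
tilt_deg = math.degrees(math.atan2(math.hypot(*plane_def[0][:2]), plane_def[0][2]))

print(f"height gap at part center: {gap_mm * 1000:.1f} um")
print(f"tilt of Plane DEF relative to Plane ABC: {tilt_deg:.3f} deg")
```

With these made-up coordinates the two planes sit roughly 11 µm apart at the center of the part and are slightly tilted relative to each other, even though both describe the same surface.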

With non-contact technologies, a dense point cloud (aka “The Digital Twin”) alleviates the measurement ambiguity introduced by different tactile measurement systems.
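One way to see why a dense point cloud helps is to fit a single plane to all of the points instead of picking three. The sketch below uses an ordinary least-squares fit, which is a common choice but only one of several possible fitting methods, and is not necessarily what any particular metrology package does. The ridged surface is simulated with an assumed sine profile; the amplitude, period, and grid spacing are hypothetical.

```python
import math


def det3(m):
    """Determinant of a 3x3 matrix given as nested lists."""
    return (m[0][0] * (m[1][1] * m[2][2] - m[1][2] * m[2][1])
            - m[0][1] * (m[1][0] * m[2][2] - m[1][2] * m[2][0])
            + m[0][2] * (m[1][0] * m[2][1] - m[1][1] * m[2][0]))


def fit_plane(points):
    """Least-squares fit of z = a*x + b*y + c to a list of (x, y, z) points."""
    n = len(points)
    sx = sum(x for x, _, _ in points)
    sy = sum(y for _, y, _ in points)
    sz = sum(z for _, _, z in points)
    sxx = sum(x * x for x, _, _ in points)
    syy = sum(y * y for _, y, _ in points)
    sxy = sum(x * y for x, y, _ in points)
    sxz = sum(x * z for x, _, z in points)
    syz = sum(y * z for _, y, z in points)
    # Normal equations M * [a, b, c] = rhs, solved with Cramer's rule.
    m = [[sxx, sxy, sx], [sxy, syy, sy], [sx, sy, n]]
    rhs = [sxz, syz, sz]
    d = det3(m)
    if d == 0:
        raise ValueError("points do not span a plane")
    coeffs = []
    for col in range(3):
        mc = [row[:] for row in m]
        for row in range(3):
            mc[row][col] = rhs[row]
        coeffs.append(det3(mc) / d)
    return tuple(coeffs)


# Simulated scan of a ridged surface (assumed values, in mm).
AMPLITUDE = 0.005   # ridge height above / valley depth below the mean surface
PERIOD = 1.0        # spacing between ridges
cloud = [
    (i * 0.05, j * 0.5, AMPLITUDE * math.sin(2 * math.pi * (i * 0.05) / PERIOD))
    for i in range(201)
    for j in range(5)
]

a, b, c = fit_plane(cloud)
residuals = [z - (a * x + b * y + c) for x, y, z in cloud]
peak_to_valley = max(residuals) - min(residuals)

print(f"points used: {len(cloud)}")
print(f"fitted plane: z = {a:.2e}*x + {b:.2e}*y + {c:.2e}")
print(f"peak-to-valley of residuals: {peak_to_valley * 1000:.1f} um")
```

The fitted plane runs through the middle of the ridges and valleys, and the residuals keep the texture information (here a peak-to-valley close to twice the assumed amplitude) that a three-point plane discards.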

Data “Q” – Quality and Quantity

Data quality is critical for the Digital Twin. When addressing data quality, the first place to start is with our instruments. Standards such as ISO 10360 were designed to evaluate consistency across different tactile systems. Unfortunately, this standard may not be the best way of comparing tactile and non-contact tools, because each type of system generates different errors. To account for these differences, ISO 10360 tests produce a Maximum Permissible Error (MPE) that serves as a common basis for comparing systems.
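For length measurements, instrument data sheets commonly quote the MPE in the form A + L/K, in micrometres, with the measured length L in millimetres. The sketch below evaluates that expression and checks a set of observed errors against it. The constants A and K, the test lengths, and the observed errors are hypothetical values chosen for illustration; they do not describe any real instrument.

```python
def mpe_um(length_mm, a_um=2.0, k=300.0):
    """MPE in micrometres for a given length, using the A + L/K form (hypothetical A and K)."""
    return a_um + length_mm / k


def within_mpe(observations, a_um=2.0, k=300.0):
    """Return True if every observed error lies inside +/- MPE for its length."""
    return all(abs(err) <= mpe_um(length, a_um, k) for length, err in observations)


# (calibrated length in mm, observed error in um) from a made-up test run.
observations = [
    (50.0, 1.4),
    (150.0, -2.1),
    (300.0, 2.6),
    (600.0, -4.5),
]

for length, err in observations:
    limit = mpe_um(length)
    status = "ok" if abs(err) <= limit else "exceeds MPE"
    print(f"L = {length:6.1f} mm  error = {err:+.1f} um  MPE = {limit:.2f} um  {status}")

print(f"all errors within MPE: {within_mpe(observations)}")
```

In this invented run, the longest test length exceeds its limit, so the instrument would not meet the stated MPE even though the shorter lengths pass.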

Metrology is concerned with measurement error, that is, deviation from a value considered “true”. When we compare two similar measurement tools, the reading from the instrument with the smaller error is accepted as the true value. In general, very few non-contact systems go through the entire ISO 10360 process, because of these differences in measurement errors.

While many will say that tactile measurements are more accurate than non-contact measurements, this is not necessarily true. Each technique generates errors, just different ones. Tactile methods, which capture a small number of discrete measurements, face some common errors, including ball correction error and hysteresis error. Non-contact systems, which capture a point cloud of tens of thousands to millions of data points, generate different errors, which we will examine more closely in part two.

Part 2 of this blog will cover examples of some tactile and non-contact errors, and how such errors are overcome with non-contact measurement techniques.